Thinking Fast and Slow Summary | Daniel Kahneman

1-Sentence Summary of Thinking Fast and Slow

In Thinking, Fast and Slow, Daniel Kahneman describes two systems of thinking: System 1 is fast, automatic, and intuitive, while System 2 is slow, deliberate, and analytical.

About Daniel Kahneman

Daniel Kahneman is Professor of Psychology and Public Affairs Emeritus at the Princeton School of Public and International Affairs, the Eugene Higgins Professor of Psychology Emeritus at Princeton University, and a fellow of the Center for Rationality at the Hebrew University in Jerusalem. Dr. Kahneman is a member of the National Academy of Sciences, the Philosophical Society, and the American Academy of Arts and Sciences. He is also a fellow of the American Psychological Association, the American Psychological Society, the Society of Experimental Psychologists, and the Econometric Society. In 2002, Kahneman was awarded the Nobel Memorial Prize in Economic Sciences. In 2015, The Economist listed him as the seventh most influential economist in the world.

Synopsis

Thinking, Fast and Slow outlines the two modes of thought our brains use most often. Like a computer, our brain is built of systems. System 1 is fast, intuitive, and emotional. It is also the most common source of mistakes and stagnation, so Daniel Kahneman encourages us to rely on it less. In comparison, System 2 is a slower, more deliberate, and logical thought process. Kahneman recommends tapping into this system more frequently. Beyond this advice, Kahneman explains how and why we make our decisions.

StoryShot #1: System 1 Is Innate

There are two systems associated with our thought processes. For each one, Kahneman outlines its primary functions and the decision-making processes associated with it.

System 1 includes all capabilities that are innate and generally shared with similar creatures within the animal kingdom. For example, each of us is born with an innate ability to recognize objects, orient our attention to important stimuli, and fear things linked to death or disease. System 1 also deals with mental activities that have become near-innate by becoming faster and more automatic. These activities generally move into System 1 because of prolonged practice. Certain pieces of knowledge will be automatic for you. For example, you do not even have to think about what the capital of England is. Over time, you have built an automatic association with the question, ‘What is the capital of England?’ As well as intuitive knowledge, System 1 also deals with learned skills, such as reading a book, riding a bike, and knowing how to act in common social situations.

There are also certain actions that are generally in system 1 but can also fall into system 2. This overlap occurs if you are making a deliberate effort to engage with that action. For example, chewing will generally fall into system 1. That said, suppose you become aware that you should be chewing your food more than you had been. In that case, some of your chewing behaviors will be shifted into the effortful system 2.

Attention is often associated with both systems 1 and 2. They work in tandem. For example, system 1 will be driving your immediate involuntary reaction to a loud sound. Your system 2 will then take over and offer voluntary attention to this sound and logical reasoning about the sound’s cause.

System 1 is a filter through which you interpret your experiences. It is the system you use for making intuitive decisions. Because it is evolutionarily primitive, it is also the older of the two systems. System 1 is unconscious and impulse-driven. Although you might feel System 1 is not having a significant impact on your life, it influences many of your choices and judgments.

StoryShot #2: System 2 Can Control Parts of System 1

System 2 comprises a range of activities. But each of these activities requires attention and is disrupted when attention is drawn away. Without attention, your performance in these activities will diminish. Significantly, system 2 can change the way system 1 works. For example, detection is generally an act of system 1. You can set yourself, via system 2, to search for a specific person in a crowd. This priming by system 2 allows your system 1 to perform better, meaning you are more likely to find the specific person in the crowd. This is the same process we utilize when we are completing a word search.

Because System 2 activities require attention, they are generally more effortful than System 1 activities. It is also challenging to carry out more than one System 2 activity at a time. The only tasks that can be completed simultaneously fall on the lower limits of effort; for example, holding a conversation while driving. That said, it is not wise to hold a conversation while overtaking a truck on a narrow road. Essentially, the more attention a task requires, the less feasible it is to complete another System 2 task at the same time.

System 2 is younger, having developed in the last several thousand years. System 2 has become increasingly important as we adapt to modernization and shifting priorities. Most of the second system’s operations require conscious attention, such as giving someone your phone number. The operations of system 2 are often associated with the subjective experience of agency, choice, and concentration. When we think of ourselves, we identify with System 2. It is the conscious, reasoning self that has beliefs, makes choices, and decides what to think about and what to do.

StoryShot #3: The Two Systems Support Each Other

Based on the two systems’ descriptions, it is easy to imagine that they operate one after the other. Kahneman explains that the two systems are actually integrated and mutually supportive. Almost all tasks involve a mix of both systems, which complement each other. For example, emotions (System 1) are crucial in adopting logical reasoning (System 2). Emotions make our decision-making more meaningful and effective.

Another example of the two systems working in tandem is when we are playing sport. Certain parts of the sport will be automatic actions. Consider a game of tennis. Tennis will utilize running, which is an innate skill in humans and is controlled by system 1. Hitting a ball can also become a system 1 activity through practice. That said, there will always be specific strokes or tactical decisions that will require your system 2. So, both systems are complementary to each other as you play a sport, such as tennis.

Issues can arise when people over-rely on System 1 because it requires less effort. Additional issues are associated with activities that are outside your routine. This is when Systems 1 and 2 come into conflict.

StoryShot #4: Heuristics As Mental Shortcuts

The second part of the book introduces the concept of heuristics. Heuristics are mental shortcuts we create as we make decisions. We are always seeking to solve problems with the greatest efficiency. So, heuristics are highly beneficial for conserving energy throughout our everyday lives. For example, our heuristics help us to automatically apply previous knowledge to slightly different circumstances. Although heuristics can be positive, it is also essential to consider that heuristics can be a source of prejudice. For example, you may have one negative experience with a person from a specific ethnic group. If you rely solely on your heuristics, you might stereotype other people from the same ethnic group. Heuristics can also cause cognitive biases, systematic errors in thinking, bad decisions, or misinterpretation of events.

StoryShot #5: The Biases We Create in Our Own Minds

Kahneman introduces several common biases and heuristics that can lead to poor decision-making:

- The law of small numbers: This law describes our strong tendency to believe that small samples closely resemble the population from which they are drawn. People underestimate the variability in small samples. To put it another way, people overestimate what a small study can achieve. Let’s say a drug is successful in 80% of patients. How many patients will respond if five are treated? Intuition says four, but in a sample of five there’s only a 41% chance that exactly four people will respond.

- Anchoring: When people make choices, they tend to depend more heavily on pre-existing information or the first information they come across. This is known as anchoring bias. If you first see a T-shirt that costs $1,200 and then see a second one that costs $100, you’re likely to see the second shirt as cheap. If you had seen only the second shirt, which costs $100, you wouldn’t consider it cheap. The anchor, the first price you saw, had an undue impact on your decision.

- Priming: Our minds work by making associations between words and items. Therefore, we are susceptible to priming. A common association can be invoked by anything and lead us in a particular direction with our decisions. Kahneman explains that priming is the basis for nudges and advertising using positive imagery. For example, Nike primes for feelings of exercise and achievement. When starting a new sport or wanting to maintain their fitness, consumers are likely to think of Nike products. Nike supports pro athletes and uses slogans like “Just Do It” to demonstrate the athletes’ success and perseverance. Here’s another example: A restaurant owner who has too much Italian wine in stock can prime customers to buy this sort of wine by playing Italian music in the background.

- Cognitive ease: Whatever is easier for System 2 is more likely to be believed. Ease arises from idea repetition, clear display, a primed idea, and even one’s own good mood. It turns out that even the repetition of a falsehood can lead people to accept it, despite knowing it’s untrue, since the concept becomes familiar and is cognitively easy to process. An example of this would be an individual who is surrounded by people who believe and talk about a piece of fake news. Although evidence suggests this idea is false, the ease of processing this idea now makes believing it far easier.

- Jumping to conclusions: Kahneman suggests that System 1 is a machine that works by jumping to conclusions. These conclusions are based on ‘What you see is all there is.’ In effect, System 1 draws conclusions based on readily available and sometimes misleading information. Once these conclusions are made, we believe in them to the very end. Halo effects, confirmation bias, framing effects, and base-rate neglect are all examples of jumping to conclusions in practice.

- The halo effect is when you attribute more positive features to a person/thing based on one positive impression. For example, believing a person is more intelligent than they actually are because they are beautiful.

- Confirmation bias occurs when you have a certain belief and seek out information that supports this belief. You also ignore information that challenges this belief. For example, a detective may identify a suspect early in the case but may only seek confirming instead of disproving evidence. Filter bubbles or “algorithmic editing” amplify confirmation bias in social media. The algorithms accomplish this by showing the user only information and posts they will likely agree with rather than exposing them to opposing perspectives.

- Framing effects relate to how the context of a dilemma can influence people’s behavior. For example, people tend to avoid risk when a positive frame is presented and seek risk when a negative frame is presented. In one study, when a late registration penalty was introduced, 93% of PhD students registered early. But the percentage declined to 67% when it was presented as a discount for early registration.

- Finally, base-rate neglect, or the base-rate fallacy, is our tendency to focus on individuating information rather than base-rate information. Individuating information is specific to a certain person or event. Base-rate information is objective, statistical information. We tend to assign greater value to the specific information and often ignore the base-rate information altogether. So, we are more likely to make assumptions based on individual characteristics than on the prevalence of something in general. The false-positive paradox is an example of the base-rate fallacy: in some cases there are more false positives than true positives. For example, 100 out of 1,000 people test positive for an infection, but only 20 actually have the infection. This means 80 of the positive results were false positives. The probability of a positive result depends on several factors, including the accuracy of the test and the characteristics of the sampled population. The prevalence, meaning the proportion of people who have a given condition, can be lower than the test’s false-positive rate. In such a situation, even a test that has a very low chance of producing a false positive in an individual case will give more false positives than true positives overall. Here’s another example: Even if one person in your Chemistry elective course looks and acts like a traditional medical student, the chances that they are studying medicine are slim. This is because medical programs usually have only 100 or so students, compared to the thousands of students enrolled in other faculties such as Business or Engineering. While it might be easy to make snap judgments about people based on specific information, we shouldn’t allow this to completely erase the baseline statistical information.

- Availability: The bias of availability occurs when we take into account a salient event, a recent experience, or something that’s particularly vivid to us, to make our judgments. People who are guided by System 1 are more susceptible to the Availability bias than others. An example of this bias would be listening to the news and hearing there has been a large plane crash in another country. If you had a flight the following week, you could have a disproportionate belief that your flight will also crash.

- The Sunk-Cost Fallacy: This fallacy occurs when people continue to invest additional resources into a losing account despite better investments being available. For example, when investors allow the purchase price of a stock to determine when they can sell, they fall prey to the sunk-cost fallacy. Investors’ inclination to sell winning stocks too early while holding on to losing stocks for far too long has been well researched. Another example is staying in a long-term relationship despite it being emotionally damaging. People fear starting over because it would mean everything they did in the past was for nothing, but this fear is usually more destructive than letting go. This fallacy is also one reason people become addicted to gambling. To tackle this fallacy, you should avoid escalating your commitment to something that could fail.
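
Two of the numbers in the list above can be checked with a few lines of Python. This is a minimal sketch, not anything taken from the book: the function names are hypothetical, and the test's sensitivity and false-positive rate are assumed values chosen only to reproduce the "20 real infections among 100 positives" example.

```python
from math import comb


def prob_exactly_k(n: int, k: int, p: float) -> float:
    """Binomial probability that exactly k of n independent patients respond."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def share_of_positives_that_are_true(prevalence: float,
                                     sensitivity: float,
                                     false_positive_rate: float) -> float:
    """Fraction of positive test results that belong to truly infected people."""
    true_positives = prevalence * sensitivity
    false_positives = (1 - prevalence) * false_positive_rate
    return true_positives / (true_positives + false_positives)


# Law of small numbers: the drug works in 80% of patients, five are treated.
p_four = prob_exactly_k(n=5, k=4, p=0.8)
print(f"P(exactly 4 of 5 respond) = {p_four:.2%}")

# Base-rate neglect: 1,000 people, 20 of them infected (2% prevalence).
# Assumed for illustration: the test catches every infection and
# wrongly flags 80 of the 980 healthy people.
ppv = share_of_positives_that_are_true(
    prevalence=20 / 1000, sensitivity=1.0, false_positive_rate=80 / 980
)
print(f"Share of positive tests that are real infections = {ppv:.0%}")
```

The first result is about 41%, matching the drug example. The second is 20%: even though the assumed test errs on only about 8% of healthy people, most positives are false because healthy people vastly outnumber infected ones.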

StoryShot #6: Regression to the Mean

Regression to the mean is the statistical tendency for extreme outcomes to be followed by outcomes closer to the average. Despite this, humans tend to read lucky and unlucky streaks as a sign of future outcomes, e.g., ‘I have lost five slot machine pulls in a row, so I am due a win.’ This belief is associated with several mental shortcomings that Kahneman considers:

- Illusion of understanding: We construct narratives to make sense of the world. We look for causality where none exists.

- Illusion of validity: Pundits, stock pickers and other experts develop an outsized sense of expertise.

- Expert intuition: Algorithms applied with discipline often outdo experts and their sense of intuition.

- Planning fallacy: This fallacy occurs when people overestimate the benefits of a project and underestimate its costs and duration because they focus on their own plan rather than on how similar projects have turned out.

- Optimism and the Entrepreneurial Delusion: Most people are overconfident, tend to neglect competitors, and believe they will outperform the average.
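
Regression to the mean, described at the start of this section, can be illustrated with a small simulation. This is a sketch under stated assumptions, not a model from the book: each score is assumed to be a fixed "skill" plus fresh random "luck", both drawn from a standard normal distribution, and the function name is hypothetical.

```python
import random
from statistics import mean


def simulate_two_rounds(n_players: int, seed: int = 0):
    """Return (round1, round2) scores where score = fixed skill + fresh luck."""
    rng = random.Random(seed)
    skills = [rng.gauss(0, 1) for _ in range(n_players)]
    round1 = [s + rng.gauss(0, 1) for s in skills]
    round2 = [s + rng.gauss(0, 1) for s in skills]
    return round1, round2


round1, round2 = simulate_two_rounds(n_players=10_000)

# Pick roughly the top 10% of performers in round 1 and follow them into round 2.
cutoff = sorted(round1, reverse=True)[len(round1) // 10]
top = [i for i, score in enumerate(round1) if score > cutoff]

top_round1_mean = mean(round1[i] for i in top)
top_round2_mean = mean(round2[i] for i in top)
overall_mean = mean(round2)

print(f"Top group, round 1: {top_round1_mean:.2f}")
print(f"Top group, round 2: {top_round2_mean:.2f}")
print(f"Everyone, round 2:  {overall_mean:.2f}")
```

The top group still beats the average in round 2 because skill persists, but its score drops toward the mean because the luck that helped put it on top does not carry over. Nothing causes the drop; it is what selection on a noisy measure looks like.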

StoryShot #7: Hindsight Significantly Influences Decision-Making

Daniel Kahneman shows how little of our past we understand. He highlights hindsight bias, which has an especially negative effect on the decision-making process. Specifically, hindsight shifts the measure used to assess the soundness of decisions. This shift moves the measure from the process itself to the nature of the outcome. Kahneman notes that actions that seemed prudent in foresight can look irresponsibly negligent in hindsight.

A general limitation of humans is our inability to accurately reconstruct past states of knowledge or beliefs that have changed. Hindsight bias has a significant impact on the evaluations of decision-makers. It leads observers to assess the quality of a decision not by whether the process was sound but by whether its outcome was good or bad.

Hindsight is especially unkind to decision-makers who act as agents for others: physicians, financial advisers, third-base coaches, CEOs, social workers, diplomats, and politicians. We are prone to blaming decision-makers for good decisions that worked out badly. We also give them too little credit for successful actions that appear obvious only after the fact. In short, people show a clear outcome bias.

Although hindsight and the outcome bias generally foster risk aversion, they also bring undeserved rewards to irresponsible risk seekers. An example of this is entrepreneurs who take crazy gambles and luckily win. Lucky leaders are also never punished for having taken too much risk.

StoryShot #8: Risk Aversion

Kahneman notes that humans tend to be risk-averse, meaning we tend to avoid risk whenever we can. Most people dislike risk because of the possibility of ending up with the worst outcome. So, if they are offered the choice between a gamble and an amount equal to its expected value, they will pick the sure thing. The expected value is calculated by multiplying each possible outcome by the likelihood that it will occur and summing all of those values. A risk-averse decision-maker will even choose a sure thing that is less than the expected value of the gamble. In effect, they are paying a premium to avoid uncertainty.
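
The expected-value calculation described above can be sketched in a few lines. The gamble and the sure amount below are hypothetical numbers chosen for illustration, and `expected_value` is an illustrative helper, not something from the book.

```python
def expected_value(gamble):
    """Sum of each payoff multiplied by its probability."""
    return sum(prob * payoff for prob, payoff in gamble)


# Hypothetical gamble: a coin flip between winning $100 and winning nothing.
coin_flip = [(0.5, 100.0), (0.5, 0.0)]

# Hypothetical guaranteed amount a risk-averse person might accept instead.
sure_thing = 45.0

ev = expected_value(coin_flip)
risk_premium = ev - sure_thing  # what is given up to avoid uncertainty

print(f"Expected value of the gamble: ${ev:.2f}")
print(f"Premium paid to avoid risk:   ${risk_premium:.2f}")
```

Here the gamble is worth $50 on average, so someone who takes the guaranteed $45 is effectively paying $5 for certainty.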

StoryShot #9: Loss Aversion

Kahneman also introduces the concept of loss aversion. Many options we face in life are a mixture of potential loss and gain. There is a risk of loss and an opportunity for gain. So, we must decide whether to accept the gamble or reject it.

Loss aversion refers to the relative strength of two motives: we are driven more strongly to avoid losses than achieve gains. A reference point is sometimes the status quo, but it can also be a goal in the future. For example, not achieving a goal is a loss; exceeding the goal is a gain.

The two motives are not equally powerful. The aversion to failing to reach a goal is far stronger than the desire to exceed it. So, people often adopt short-term goals that they strive to achieve but not necessarily exceed. They are likely to reduce their efforts when they have reached immediate goals. This means their results can sometimes violate economic logic.

Kahneman also explains that people attach value to gains and losses rather than to wealth. So, the decision weights that they assign to outcomes are different from probabilities. People who face terrible options take desperate gambles, accepting a high probability of making things worse in exchange for a small hope of avoiding a large loss. Risk-taking of this kind often turns manageable failures into disasters. Because defeat is so difficult to accept, the losing side in wars often fights long past the point at which victory is guaranteed for the other side.

StoryShot #10: Do Not Trust Your Preferences to Reflect Your Interests

On decisions, Daniel Kahneman suggests that we all hold an assumption that our decisions are in our best interest. This is not usually the case. Our memories, which are not always right or interpreted correctly, often significantly influence our choices.

Decisions that do not produce the best possible experience are bad news for believers in the rationality of choice. We cannot fully trust our preferences to reflect our interests. This holds even when those preferences are based on personal experience and recent memories.

StoryShot #11: Memories Shape Our Decisions

Memories shape our decisions. Worryingly, our memories can be wrong. Inconsistency is built into the design of our minds. We have strong preferences about the duration of our experiences of pain and pleasure. We want pain to be brief and pleasure to last. Yet our memory, a function of System 1, has evolved to represent the most intense moments of an episode of pain or pleasure. A memory that neglects duration will not serve our preference for long pleasures and short pains.

A single happiness value does not easily represent the experience of a moment or an episode. Although positive and negative emotions exist simultaneously, it is possible to classify most moments of life as ultimately positive or negative. An individual’s mood at any moment depends on their temperament and overall happiness. Still, emotional wellbeing also fluctuates daily and weekly. The mood of the moment depends primarily on the current situation.

Thinking Fast and Slow Summary and Review

Thinking, Fast and Slow outlines the way that all human minds work. We all have two systems that support each other and work in tandem. Problems arise when we rely too heavily on our quick and impulsive System 1. This overreliance leads to a wide range of biases that can negatively influence decision-making. The key is to understand where these biases come from and use our analytical System 2 to keep System 1 in check.

Rating

We rate Thinking Fast and Slow 4.4/5. How would you rate Daniel Kahneman’s book based on this summary? Comment below and let us know!

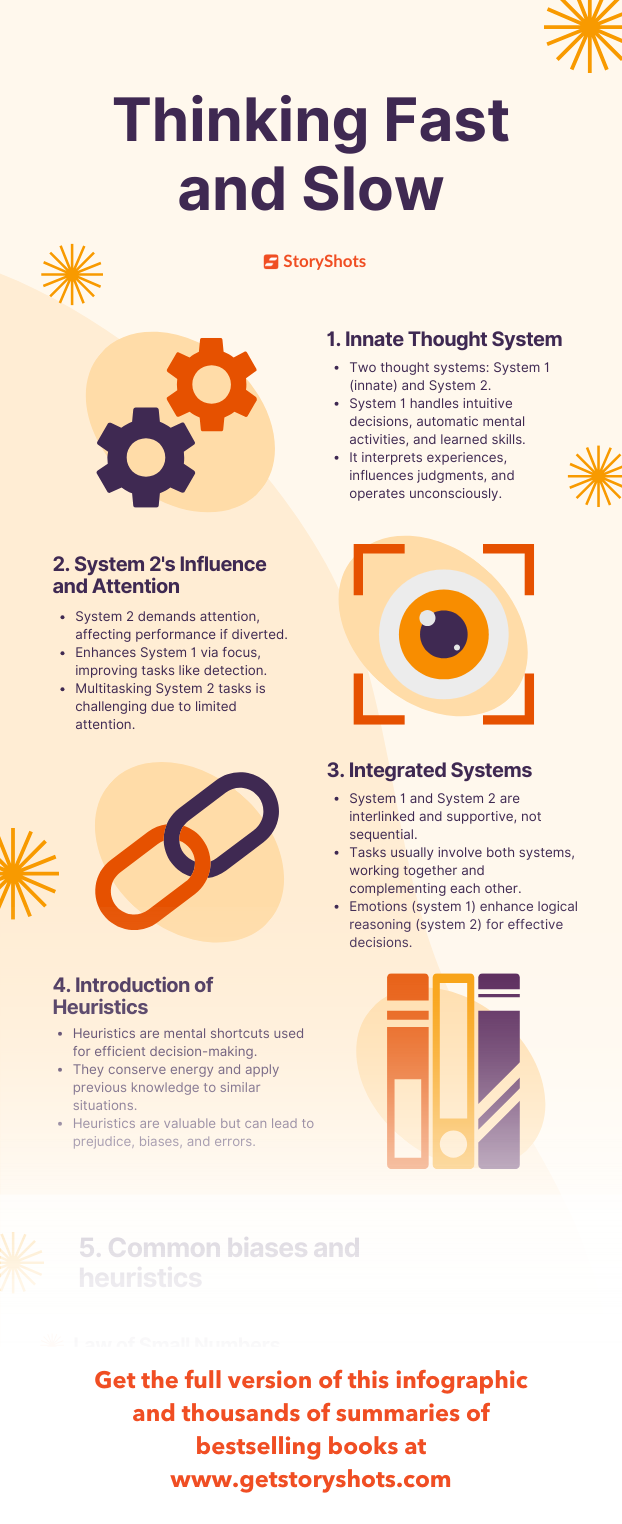

Related Free Book Summaries

Noise by Daniel Kahneman

Think Again by Adam Grant

Nudge by Richard Thaler

Predictably Irrational by Dan Ariely

Flow by Mihaly Csikszentmihalyi

Daring Greatly by Brené Brown

When by Daniel H. Pink

The Black Swan by Nassim Taleb

Everything is F*cked by Mark Manson

Six Thinking Hats by Edward De Bono

How Not to Be Wrong by Jordan Ellenberg

Talking to Strangers by Malcolm Gladwell

Tao Te Ching by Laozi

Moonwalking with Einstein by Joshua Foer

Freakonomics by Stephen Dubner and Steven Levitt

The Laws of Human Nature by Robert Greene
